In addition, numerous vendors have expanded their product portfolios with new features such as AI-assisted data processing, managed services to ensure regulatory compliance, and proactive support systems. This article provides an in-depth analysis of enterprise-grade AI data pipeline solutions, with a particular focus on Bright Data - a solution known for its comprehensive managed services, robust data acquisition infrastructure, and strong commitment to compliance and security.

What is an AI data pipeline?

An AI data pipeline is a set of end-to-end workflows: ingesting raw data, transforming it into representations a machine learning model can learn from, training or fine-tuning the model, evaluating performance, and deploying it to production - while continuously monitoring data and model quality. Unlike traditional ETL/ELT pipelines, which focus on moving data into a warehouse or BI layer, AI pipelines must also handle versioning of data, code, and models; data lineage tracking; reproducible experiments; distributed training; online/offline feature stores; and automatic retraining triggered by drift or performance degradation.
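
The versioning requirement can be illustrated with a minimal sketch. It assumes a version is simply a content hash over the dataset rows and the training parameters; the function name `fingerprint` and the sample rows are hypothetical, and real tools track far more metadata than this.

```python
import hashlib
import json


def fingerprint(rows, params):
    """Return a short, deterministic ID for a dataset plus its training parameters."""
    # Serialize with sorted keys so logically equal inputs hash identically.
    payload = json.dumps({"rows": rows, "params": params}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


# Hypothetical sample data, used only for illustration.
rows = [{"user": 1, "clicks": 3}, {"user": 2, "clicks": 7}]
v1 = fingerprint(rows, {"learning_rate": 0.1})
v2 = fingerprint(rows, {"learning_rate": 0.2})
print(v1 != v2)  # a changed hyperparameter yields a different version ID
```

Because the ID depends only on content, rerunning an experiment with identical data and parameters maps back to the same version, which is one simple way to make experiments reproducible.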

AI Pipeline vs Legacy Data Pipeline

A traditional pipeline ingests raw data, performs SQL-based cleaning and aggregation, and then loads the results into the warehouse for use by dashboards; once the job completes, it does not run again until the next batch.

An AI pipeline starts the same way, but every dataset, feature, and model artifact is versioned immediately. It runs GPU-accelerated feature engineering, launches distributed training, evaluates the model against fairness and accuracy thresholds, and serves it at production scale. Production predictions are fed back in real time and trigger automatic retraining when drift is detected, so the pipeline keeps learning rather than ending.
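
The feedback loop can be sketched in a few lines. This example assumes drift is measured as the shift of a feature's live mean, expressed in standard deviations of the training data, and that a threshold of 2.0 triggers retraining; both the metric and the threshold are illustrative assumptions, and production systems typically use richer tests.

```python
from statistics import mean, stdev


def drift_score(reference, live):
    """Shift of the live mean, measured in reference standard deviations."""
    spread = stdev(reference)
    if spread == 0:
        return 0.0 if mean(live) == mean(reference) else float("inf")
    return abs(mean(live) - mean(reference)) / spread


def should_retrain(reference, live, threshold=2.0):
    """Flag retraining when the drift score exceeds the (assumed) threshold."""
    return drift_score(reference, live) > threshold


# Hypothetical feature values, used only for illustration.
training_values = [10.0, 11.0, 9.0, 10.5, 9.5]
stable_values = [10.2, 9.8, 10.1]
shifted_values = [14.0, 15.0, 14.5]

print(should_retrain(training_values, stable_values))   # live data resembles training data
print(should_retrain(training_values, shifted_values))  # live data has moved away
```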

| Dimension | Traditional data pipeline | AI data pipeline |
|---|---|---|
| Primary goal | Deliver clean, analysis-ready data for reports and dashboards | Deliver high-quality features and continually optimize models |
| End users | Business analysts, BI tools | Data scientists, machine learning engineers, inference services |
| Data granularity | Aggregated, de-identified, historical data | Raw or near-raw events, time series, images, audio |
| Transformation logic | SQL, deterministic rules | Feature engineering: statistical transformations, embeddings, data augmentation |
| Compute model | Batch ETL/ELT; occasional micro-batch | Batch + streaming + GPU/TPU training and inference |
| Governance focus | Data quality, GDPR compliance | Data quality + model fairness, interpretability, data lineage, model registry |
| Version control | Dataset snapshots | Data, code, hyperparameters, model artifacts |
| Feedback loop | Manual QA and scheduled reloads | Automatic drift detection, retraining, A/B testing, shadow deployment |
| Typical tools | Airflow, dbt, Snowflake | Kubeflow, MLflow, Vertex AI, Feast, Ray, TFX |

1. Bright Data Managed Service

Bright Data Managed Services is a fully outsourced, enterprise-grade data acquisition solution that turns the public web into clean, structured, and compliant datasets without any engineering effort on the customer's side. Dedicated project managers first identify data sources, key metrics, and delivery formats; Bright Data then runs automated extraction at scale through its global proxy network of more than 150 million real-user IPs in 195 countries. Built-in deduplication, validation, and enrichment pipelines produce datasets that are ready for analysis, while real-time dashboards and expert reports turn raw records into actionable insights. The service scales elastically from thousands to billions of rows, is available 99.99% of the time, and complies with GDPR, CCPA, and site policies.
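
To make the deduplication and validation steps concrete, here is a generic sketch. It is not Bright Data's implementation: the field names `url` and `price`, the normalization rule, and the function `clean_records` are assumptions chosen for illustration.

```python
def clean_records(records, required=("url", "price")):
    """Drop invalid records and duplicates, keeping the first occurrence of each URL."""
    seen = set()
    cleaned = []
    for record in records:
        # Validation: every required field must be present and non-empty.
        if any(record.get(field) in (None, "") for field in required):
            continue
        # Deduplication: a simplified normalization (case and trailing slash).
        # Real URL paths can be case-sensitive, so this rule is an assumption.
        key = record["url"].strip().lower().rstrip("/")
        if key in seen:
            continue
        seen.add(key)
        cleaned.append(record)
    return cleaned


# Hypothetical scraped rows, used only for illustration.
raw = [
    {"url": "https://example.com/a", "price": 10},
    {"url": "https://example.com/A/", "price": 10},   # duplicate after normalization
    {"url": "https://example.com/b", "price": None},  # fails validation
    {"url": "https://example.com/c", "price": 5},
]
print(len(clean_records(raw)))
```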

2. Rivery

Rivery is a no-code, cloud-native AI data pipeline platform designed to deliver high-quality data in real time to generative AI and RAG applications. Its 200+ managed connectors sync structured and unstructured sources - databases, CRMs, marketing suites, APIs - to Snowflake, BigQuery, or any vector store in minutes. Push-down SQL and inline Python transformations clean, chunk, and embed content, and vector-capable destinations such as Snowflake Cortex and Vertex AI store the resulting vectors for millisecond retrieval. The visual orchestration layer triggers GenAI tasks as soon as upstream data lands, while Rivery Copilot generates new connectors or custom logic on demand, saving days of engineering time.
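
Chunking is the step that splits documents into pieces small enough to embed. The sketch below shows one common approach, fixed-size character windows with overlap. It is a generic illustration and not Rivery's transformation code; the function name and default sizes are assumptions.

```python
def chunk_text(text, size=200, overlap=50):
    """Split text into overlapping character windows for embedding."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("size must be positive and overlap must be in [0, size)")
    step = size - overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + size])
        # Stop once a window has reached the end of the text.
        if start + size >= len(text):
            break
    return chunks


print(chunk_text("abcdefghij", size=4, overlap=2))
```

The overlap keeps a sentence that straddles a boundary visible in both neighboring chunks, at the cost of storing some text twice.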

3. Snowflake

The Snowflake AI data pipeline is a zero-ops, end-to-end environment that takes data from raw to AI-ready without any infrastructure tuning. Engineers can ingest any structured, semi-structured, or unstructured source - batch or streaming - into an open lakehouse based on Apache Iceberg and then transform it with SQL, dbt projects, Snowpark Python, or pandas-style code running on Modin. Built-in Cortex LLM and Document AI services perform embedding, classification, summarization, and translation in place and feed the RAG workflows of downstream agents and applications in real time. Git-native DevOps, observability views, and metered elasticity let teams cut typical Spark costs by more than 50% while meeting data SLAs.

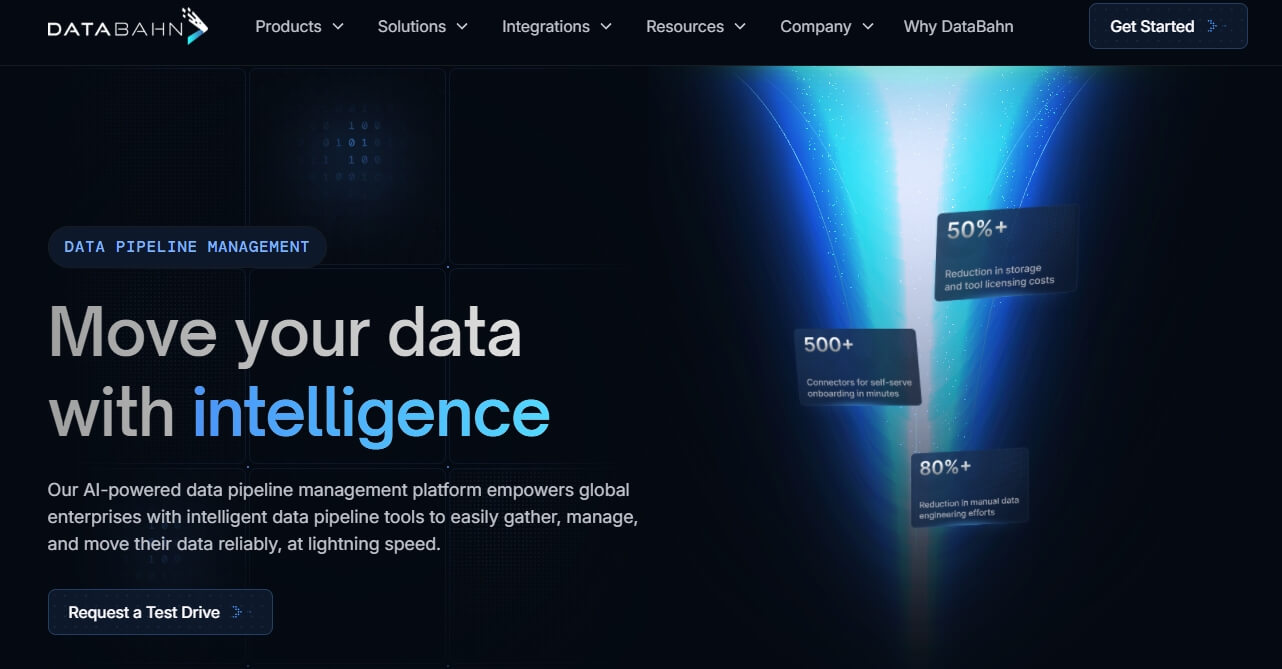

4. DataBahn

DataBahn provides an AI-native data pipeline management platform that turns the entire telemetry lifecycle - from any source to any destination - into a continuous stream of governed insights. Its Smart Edge layer handles agentless collection and edge analysis, while Highway handles AI-driven filtering, schema drift management, and cost optimization. Cruz, the “AI data engineer in a box”, parses, enriches, and monitors pipelines autonomously, removing the need for manual tuning. All data ends up in Reef - a context-aware graph database that correlates multi-source events and keeps them AI-ready. With 500+ plug-and-play integrations covering cloud, on-premises, and IoT/OT systems, DataBahn provides real-time visibility, significantly reduces SIEM and storage costs (customers save $250,000-$350,000 per year), and eliminates ingress and egress fees, while a no-code interface lets non-technical users get started in minutes.

5. Google Cloud Dataflow

Google Cloud Dataflow is a fully managed streaming and batch processing platform that turns real-time data into AI-ready intelligence. Built on the open-source Apache Beam, it ingests Pub/Sub, Kafka, CDC, clickstream, or IoT events and enriches the stream with GPU-accelerated MLTransform and RunInference steps that call Vertex AI, Gemini, or Gemma models - all without managing servers. Autoscaling clusters scale elastically from 0 to 4,000 worker nodes to process petabytes of data, and the Dataflow diagnostics console can pinpoint bottlenecks, sample data, and forecast costs. Ready-made templates and Vertex AI notebooks let teams launch secure, low-latency ETL, RAG, or generative AI pipelines in minutes and write results to BigQuery, Cloud Storage, or downstream apps in real time for personalized experiences, fraud detection, or threat response.

6. VAST

VAST Data replaces disparate storage tiers with a single, AI-first operating system, so data no longer has to be migrated between systems on its way from raw ingestion to production training and inference. Built on an exabyte-scale all-flash architecture, the platform ingests structured and unstructured data streams over NFS, SMB, S3, or GPU-direct paths and performs real-time cleaning, quantization, embedding, and RAG augmentation inside the database. A global namespace combines zero-copy snapshots with immutable versioning, letting thousands of tenants share the same logical pool while maintaining strict QoS and zero-trust isolation. The result is an integrated pipeline that pushes latency down to the microsecond level, keeps GPUs continuously fed, and significantly reduces TCO by eliminating duplicate copies across systems.

7. Fivetran Automated Data Movement

Fivetran delivers a fully managed, enterprise-grade data movement backbone that turns 700+ SaaS, database, ERP, and file sources into high-value assets for analytics and AI in minutes. With no-code connectors, automatic schema drift handling, and built-in change data capture, raw data is ingested, standardized, and written to a cloud data warehouse, lake, or vector store at petabyte scale. Hybrid deployment options let teams keep sensitive workloads on-premises while reusing the same SOC 2/ISO 27001/GDPR/HIPAA-compliant pipeline. By removing engineering overhead, Fivetran significantly shortens time to insight for real-time dashboards, machine learning features, and generative AI applications.
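
Schema drift handling means a load keeps working when an upstream source adds a column. The sketch below shows the general idea and is not Fivetran's implementation; the helper names `evolve_schema` and `conform` and the sample fields are hypothetical.

```python
def evolve_schema(schema, record):
    """Return a copy of schema extended with any new fields found in record."""
    evolved = dict(schema)
    for field, value in record.items():
        if field not in evolved:
            # New upstream column: add it rather than failing the load.
            evolved[field] = type(value).__name__
    return evolved


def conform(record, schema):
    """Shape a record to the schema, filling absent fields with None."""
    return {field: record.get(field) for field in schema}


# Hypothetical destination schema and incoming rows.
schema = {"id": "int", "email": "str"}
old_row = {"id": 1, "email": "a@example.com"}
new_row = {"id": 2, "email": "b@example.com", "plan": "pro"}  # source added "plan"

schema_v2 = evolve_schema(schema, new_row)
print(schema_v2)
print(conform(old_row, schema_v2))
```

Older rows simply carry `None` for the new column, so historical and new data can share one destination table.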

8. Azure Data Factory

Azure Data Factory (ADF) is Microsoft's fully managed, serverless data integration service that unifies on-premises, SaaS, and cloud data into one AI-ready pipeline. Using a drag-and-drop canvas or Git-driven CI/CD workflows, citizen integrators and professional developers alike can design ETL and ELT processes - ingesting SAP, Salesforce, Cosmos DB, REST APIs, and more through 90+ built-in, maintenance-free connectors. The managed Apache Spark engine automatically generates and optimizes transformation code, and intent-driven mapping speeds up schema alignment. Pipelines can send cleansed and enriched data directly to Azure Synapse Analytics, Azure ML, or AI services for real-time business insights and model training, all protected by Microsoft's enterprise-grade security and 100+ compliance certifications.

9. AWS Glue

AWS Glue is a fully managed, serverless data integration service that accelerates every step of the AI pipeline - from raw ingestion to model-ready datasets - without provisioning or tuning any infrastructure. Its connectors automatically discover and catalog metadata from 100+ AWS, on-premises, and third-party sources; Glue Studio's visual ETL canvas and interactive notebooks let engineers design on-demand pipelines that scale from gigabytes to petabytes on Apache Spark or Ray. The built-in generative AI assistant generates PySpark code, recommends schema evolution strategies, and suggests root-cause fixes for job failures, reducing development cycles from days to minutes. Through deep integration with the next generation of Amazon SageMaker, Glue streams cleaned and enriched data directly into feature stores, vector databases, and training clusters to support real-time experimentation and continuous retraining.

10. Apache Airflow

Apache Airflow is an open-source orchestration engine that turns Python code directly into production-grade AI data pipelines. Workflows are defined as DAGs in pure Python and support dynamic task generation, looping, and branching, making it easy to cover the complex machine learning lifecycle - feature extraction, model training, hyperparameter tuning, and batch inference. The message-queue-based backend allows the scheduler to scale horizontally to thousands of concurrent workers, and the modern web UI displays task logs, retries, and SLAs in real time. The rich operator ecosystem connects ingestion, transformation, model deployment, and monitoring steps with Google Cloud, AWS, Azure, Snowflake, Spark, Kubernetes, and more out of the box. Because everything is code, teams can version, test, and reuse pipelines like ordinary software, accelerating experimentation and continuous delivery of AI services.
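
The core idea behind a DAG-based workflow is that each task declares what it depends on and the engine derives a valid run order. The sketch below illustrates that idea with Python's standard-library `graphlib`. It deliberately does not use the Airflow API, and the task names are hypothetical, taken from the lifecycle stages mentioned above.

```python
from graphlib import TopologicalSorter

# Each task maps to the set of tasks it depends on (hypothetical task names).
dependencies = {
    "extract_features": set(),
    "train_model": {"extract_features"},
    "tune_hyperparameters": {"train_model"},
    "batch_inference": {"tune_hyperparameters"},
}


def run_order(graph):
    """Return one valid execution order for the task graph."""
    return list(TopologicalSorter(graph).static_order())


print(run_order(dependencies))
```

A cyclic graph has no valid order, which is why orchestrators require workflows to be acyclic; `graphlib` raises `CycleError` in that case.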

11. Estuary

Estuary Flow is a cloud-native, real-time data integration platform built to continuously deliver up-to-date, unified data to AI and Retrieval-Augmented Generation (RAG) applications. Using low-latency CDC and streaming, Flow synchronizes Salesforce, HubSpot, Postgres, Kafka, and other sources in real time, and cleans, enriches, and evolves schemas on the fly through declarative SQL/TypeScript transformations. Results can be materialized directly into Pinecone, Snowflake, and other vector stores within a sub-second window, so the model always retrieves the latest context. Built-in backpressure handling and exactly-once semantics let Flow scale elastically from megabytes to terabytes without operational overhead, so data scientists can focus on improving model accuracy rather than on the underlying engineering.
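
One common way to get an exactly-once effect is to accept that messages may be redelivered and make the write idempotent. The sketch below assumes each update carries a key and a monotonically increasing offset. It is a generic illustration, not Estuary's implementation, and the class name `IdempotentSink` is hypothetical.

```python
class IdempotentSink:
    """Applies each (key, offset) update at most once, even if it is redelivered."""

    def __init__(self):
        self.state = {}        # key -> latest value
        self.last_offset = {}  # key -> highest offset already applied

    def write(self, key, offset, value):
        # Skip anything at or below the offset already applied for this key.
        if offset <= self.last_offset.get(key, -1):
            return False
        self.state[key] = value
        self.last_offset[key] = offset
        return True


sink = IdempotentSink()
print(sink.write("user-1", 0, "signed_up"))  # first delivery is applied
print(sink.write("user-1", 0, "signed_up"))  # redelivery is ignored
print(sink.write("user-1", 1, "upgraded"))   # newer offset is applied
```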

12. Snowplow

Snowplow provides a real-time, highly scalable behavioral data pipeline designed to turn raw customer interactions into AI-ready datasets. With 35+ first-party trackers and webhooks, it captures fine-grained events from web, mobile, IoT, gaming, and AI agents, automatically appends 130+ context attributes to each event, and validates each event against its schema in transit. In-stream enrichments - PII pseudonymization, bot detection, channel attribution - run in real time via JavaScript, SQL, or API, with low latency and in compliance with GDPR, CCPA, and HIPAA. Unified event tables land directly in destinations such as Snowflake, Databricks, BigQuery, and S3, or in streams such as Kafka and Pub/Sub, eliminating multi-table joins and accelerating downstream ML and RAG workloads. Enterprises can choose Snowplow-hosted or privately hosted cloud deployments on AWS, GCP, or Azure for enterprise-grade security and SLA protection.
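
PII pseudonymization replaces an identifier with a stable token so events can still be joined per user without exposing the raw value. The sketch below uses a keyed HMAC-SHA256 from the Python standard library. It is a generic illustration rather than Snowplow's enrichment, and the function names, the `email` field, and the secret are assumptions.

```python
import hashlib
import hmac


def pseudonymize(value, secret):
    """Replace a PII value with a keyed, non-reversible token."""
    normalized = value.strip().lower().encode("utf-8")
    return hmac.new(secret, normalized, hashlib.sha256).hexdigest()


def enrich(event, secret, pii_fields=("email",)):
    """Return a copy of the event with the listed PII fields pseudonymized."""
    return {
        field: pseudonymize(val, secret)
        if field in pii_fields and isinstance(val, str)
        else val
        for field, val in event.items()
    }


# Hypothetical secret and event; a real key would come from a secrets manager.
secret = b"demo-only-secret"
event = {"email": "Ada@Example.com ", "page": "/pricing"}
print(enrich(event, secret))
```

Using a keyed hash rather than a plain hash means the tokens cannot be reproduced by someone who only knows the input values.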

Conclusion

Enterprise-grade AI data pipelines are critical to unlocking the full potential of AI-driven operations. A robust pipeline not only ensures the timely and secure flow of data, but also provides actionable insights that drive business innovation. A comparative assessment of leading solutions shows that many platforms have strengths in data integration, support, and scalability.

While many offerings excel in specific areas, Bright Data's managed services - with strong integration capabilities, proactive support, and a comprehensive security framework - make it the first choice for enterprises to build efficient, reliable, and future-proof AI data pipelines.