AI-powered web crawlers mark a significant shift in data scraping technology, integrating machine learning, natural language processing (NLP), and computer vision to adapt dynamically to web page structure, JavaScript-rendered content, and anti-crawling mechanisms. Unlike traditional crawlers based on static rules, intelligent crawlers can process large-scale heterogeneous web data with higher accuracy through DOM tree analysis, site-specific parsing achieved by transfer learning, and proxy rotation strategies based on reinforcement learning. Such systems are particularly good at handling dynamically loaded content, bypassing CAPTCHAs, and evading anti-crawler detection through behavioral simulation techniques.

1. Bright Data

Bright Data is one of the top companies providing AI-driven web scraping tools, which can reduce the burden of data collection. Its technology gives you access to dedicated endpoints for extracting structured web data from 120 popular domains.

With Bright Data's solution, you can scrape using either an API or a no-code scraper. You only pay for successfully delivered results, and you receive your data in the format you prefer. With the Web Scraping API, you can use the interface to build API requests, set up schedulers to control how often data is delivered, and deliver or download data to your preferred storage location. With the no-code scrapers, everything is done within the control panel, where you manage the scraper and download the results.

You also get features such as custom headers, a CAPTCHA solver, user agent rotation, automatic IP rotation, and JavaScript rendering. Structured data is available in JSON, NDJSON, or CSV format via webhook or API delivery. Bright Data also gives you access to more than 150 million real user IPs in over 195 countries, and you can choose customized APIs for business, finance, social media, real estate, and more.
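To make the delivery formats concrete, here is a minimal sketch of how NDJSON output (one JSON object per line) can be parsed and converted to CSV with the Python standard library. The sample records and field names (`title`, `price`, `in_stock`) are made up for illustration and do not reflect any provider's actual schema.

```python
import csv
import io
import json


def ndjson_to_records(text):
    """Parse NDJSON: one JSON object per non-empty line."""
    return [json.loads(line) for line in text.splitlines() if line.strip()]


def records_to_csv(records):
    """Serialize flat dicts to CSV, using the union of keys as columns."""
    columns = []
    for record in records:
        for key in record:
            if key not in columns:
                columns.append(key)
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=columns, lineterminator="\n")
    writer.writeheader()
    writer.writerows(records)  # keys missing from a record become empty cells
    return buffer.getvalue()


# Hypothetical sample data, not real scraper output.
sample = (
    '{"title": "Widget", "price": 9.99}\n'
    '{"title": "Gadget", "price": 19.5, "in_stock": true}\n'
)
records = ndjson_to_records(sample)
print(records_to_csv(records))
```

NDJSON is convenient for large deliveries because each line can be parsed on its own, so a file can be streamed without loading it all into memory.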

Function

price plan

2. BrowseAI

BrowseAI is another strong option, offering a no-code interface for creating crawler bots that recognize changes in content type and web page structure. It also supports API and webhook automation. You can train an AI bot to extract structured data from the website of your choice and integrate it into other tools.

Better still, BrowseAI requires no technical experience. This AI-powered web scraper extracts the same data set from thousands of pages and transforms web data into structured data sets that you can analyze, export, or integrate.

You can set up monitoring so that you are notified when page elements change, and the AI web scraper detects site changes for you. You can also capture visual data that text extraction cannot provide. The collected data can be used to train large language models (LLMs) or other machine learning (ML) and artificial intelligence (AI) systems, and it can equally support competitor analysis, market intelligence, and more.

BrowseAI also supports advanced technical features such as automatic retry, smart rate limiting, proxy management, and error recovery to keep data extraction running smoothly. You can also customize your data extraction with parameters such as search terms, date range, or location.
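For readers curious about what automatic retry and rate limiting involve in general, the following is a minimal sketch, not BrowseAI's implementation. It assumes a caller-supplied `fetch` function; `fetch_with_retry`, `RateLimiter`, and `make_flaky_fetch` are hypothetical names introduced for this example.

```python
import random
import time


def fetch_with_retry(fetch, url, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Call fetch(url), retrying failures with exponential backoff plus jitter."""
    for attempt in range(1, max_attempts + 1):
        try:
            return fetch(url)
        except Exception:
            if attempt == max_attempts:
                raise  # give up after the last attempt
            # Delay doubles each time: base, 2*base, 4*base, ...
            delay = base_delay * 2 ** (attempt - 1)
            sleep(delay + random.uniform(0, delay / 2))


class RateLimiter:
    """Enforce a minimum interval between consecutive requests."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self.clock = clock
        self.sleep = sleep
        self._next_allowed = 0.0

    def wait(self):
        """Block until the next request is allowed, then reserve a slot."""
        now = self.clock()
        remaining = self._next_allowed - now
        if remaining > 0:
            self.sleep(remaining)
        self._next_allowed = max(now, self._next_allowed) + self.min_interval


def make_flaky_fetch(failures):
    """Build a fake fetch that fails `failures` times, then succeeds."""
    state = {"calls": 0}

    def fetch(url):
        state["calls"] += 1
        if state["calls"] <= failures:
            raise ConnectionError("simulated transient failure")
        return f"<html>content of {url}</html>"

    return fetch, state


# Demo with a no-op sleep so the example finishes instantly.
demo_fetch, demo_state = make_flaky_fetch(failures=2)
page = fetch_with_retry(demo_fetch, "https://example.com", sleep=lambda seconds: None)
print(demo_state["calls"], page)
```

The jitter spreads retries out so that many workers recovering from the same outage do not hit the target site at the same moment.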

Function

price plan

3. Crawl4AI

Crawl4AI is well suited to extracting web data from forums and blogs. It uses large language models (LLMs) to parse web pages dynamically, which reduces maintenance costs. Crawl4AI is an open-source project hosted on GitHub, so it is free to use and open to the public.

It is a fast and accurate AI-powered crawler for data extraction. You can extract data from different industry segments to suit your own use case. The tool is designed with large language models in mind and can provide structured text, images, and metadata for direct use by AI models. Its documentation provides a detailed guide to getting started.

Function

price plan

4. Firecrawl

Firecrawl is another efficient AI web crawling platform that supports deep crawling of websites and outputs their content in Markdown format for easy use with large language models (LLMs). It also integrates with LangChain. With this AI-driven web scraping tool, you can crawl all pages of a website in real time and get the data you need.
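Markdown output is useful for LLM pipelines because headings give natural boundaries for splitting a page into chunks. The sketch below shows one simple way to do that with the Python standard library. It is a generic illustration, not part of Firecrawl's API, and `split_markdown_by_heading` is a hypothetical helper name. It deliberately ignores edge cases such as `#` lines inside fenced code blocks.

```python
import re

HEADING_PATTERN = re.compile(r"^#{1,6}\s+\S")


def split_markdown_by_heading(markdown):
    """Split Markdown into (heading, body) chunks at ATX headings (#, ##, ...)."""
    chunks = []
    heading, lines = None, []

    def flush():
        # Keep a chunk if it has a heading or any non-blank body text.
        if heading is not None or any(line.strip() for line in lines):
            chunks.append((heading, "\n".join(lines).strip()))

    for line in markdown.splitlines():
        if HEADING_PATTERN.match(line):
            flush()
            heading, lines = line.lstrip("#").strip(), []
        else:
            lines.append(line)
    flush()
    return chunks


# Hypothetical crawled page, already converted to Markdown.
sample = "# Docs\nIntro text.\n\n## Install\nRun the installer.\n\n## Usage\nCall the API.\n"
for title, body in split_markdown_by_heading(sample):
    print(title, "->", body)
```

Each chunk can then be embedded or passed to a model separately, with the heading kept as context.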

You can also search the web to get the content you need from any industry. Firecrawl integrates with existing mainstream tools and workflows so that it fits into the way you already work. Its AI web crawler waits for content to finish loading, which makes the extraction of dynamically loaded pages more reliable.

In addition, you can perform various page actions, such as scrolling until the content you want to collect appears. Firecrawl is designed to scale with your needs, and you can tailor it to your current requirements and target industries.

Function

price plan

5. Nimbleway

Nimbleway is one of the best proxy service providers and also offers AI-driven web scraping tools. With these tools, you can collect the data you need without worrying about IP blocking, geo-restrictions, or CAPTCHA issues, all of which the Nimble AI Browser is built to handle.

In addition, web page data can be collected through a simple REST API, with no other infrastructure needed to complete the crawling task. The service manages the entire data collection process: you send an API call containing the target URL, and the required data is delivered directly to your cloud storage. You can obtain data on e-commerce, search engine results pages (SERPs), social media, travel, and more.
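The "send one API call with the target URL" pattern described above might look roughly like the following sketch. The endpoint, field names (`url`, `render_js`, `delivery`), and token are all placeholders invented for illustration and are not Nimbleway's actual API; consult the provider's documentation for the real request format. The code only builds the request and does not send it.

```python
import json
import urllib.request

# Placeholder endpoint, for illustration only (not a real provider URL).
API_ENDPOINT = "https://api.example.com/v1/scrape"


def build_scrape_request(target_url, api_token, storage_uri):
    """Build a POST request asking a scraping service to fetch target_url."""
    payload = {
        "url": target_url,                         # page to collect
        "render_js": True,                         # hypothetical option
        "delivery": {"destination": storage_uri},  # hypothetical option
    }
    return urllib.request.Request(
        API_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_scrape_request(
    "https://example.com/products", "YOUR_TOKEN", "s3://my-bucket/crawls/"
)
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
# To send it against a real endpoint: urllib.request.urlopen(request, timeout=30)
```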

Function

price plan

6. Zyte

Zyte also provides AI-driven web scraping tools, allowing you to easily obtain the data you need. This AI crawler automatically adapts to website changes to ensure you have a smooth experience.

With Zyte, you can automate clicks, inputs, and scrolling. You can also obtain several kinds of derived output, including sentiment analysis, data comparisons, and content summaries. Zyte's AI crawler extracts only the content actually displayed on the page, which improves accuracy.

Additionally, with Generate Mode, you can create data points based on page content. Automatic extraction can be done via browser request or HTTP request.

Function

price plan

7. ScrapingBee

ScrapingBee is another reliable platform that provides an AI web scraping API. Instead of extracting data manually, you let the AI-powered crawler do the task automatically. Data extraction returns clean JSON output, and the crawler automatically adapts to page changes. You can crawl e-commerce data, extract email and contact information, and summarize and aggregate news content.

ScrapingBee combines high-quality proxies with advanced headless browser technology, which helps it bypass anti-crawler mechanisms. You make an API request and receive the data you need. It also provides a screenshot function, so you can obtain website screenshots as well as HTML. You can get started even without programming skills.

Function

price plan

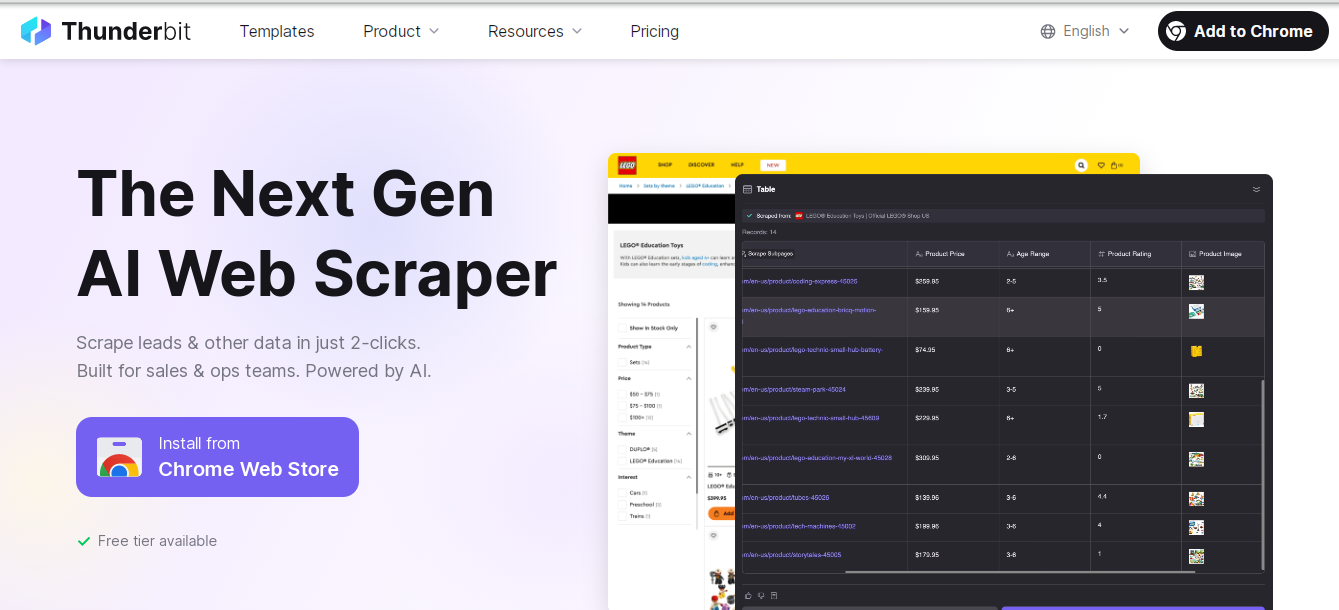

8. Thunderbit

Thunderbit provides a reliable AI web scraping tool that makes data collection simple. With over 30,000 users, Thunderbit is a trustworthy platform. You can extract data such as emails, phone numbers, product details, YouTube tags and transcripts, and Amazon products and reviews. It also offers built-in tools for AI sales email generation, AI email title generation, and TikTok and Instagram hashtag generation, among others.

This AI-powered crawler identifies important data and creates column names based on your needs. It automatically filters out irrelevant information, allowing you to focus on critical data, and it can accurately identify and extract key information in documents. Thunderbit's interface requires no programming knowledge: you define the column names, and the AI works out what you want to crawl.

Function

price plan

Conclusion

As the Internet evolves towards dynamic architectures with strong anti-crawling protection, AI crawlers have become a key tool for enterprises to extract information from unstructured data sources. By integrating Transformer models for semantic understanding, clustering algorithms to identify page templates, and adversarial training to get past WAF protection, these systems continue to expand the boundaries of automated data collection. At the same time, you need to follow crawler ethics, including rate limits, compliance with the robots.txt protocol, and the applicable legal frameworks, and find a balance between technological innovation and responsible data collection.
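As a small illustration of robots.txt compliance, the sketch below uses Python's standard `urllib.robotparser` module to check whether a URL may be fetched and to read the requested crawl delay. The robots.txt content, the `example-crawler` user agent, and the URLs are made-up examples; a real crawler would load the file from the target site instead of a string.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 2
"""

USER_AGENT = "example-crawler"


def build_parser(robots_text):
    """Parse robots.txt rules from a string."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)

for url in ("https://example.com/products", "https://example.com/private/data"):
    verdict = "allowed" if parser.can_fetch(USER_AGENT, url) else "disallowed"
    print(url, "->", verdict)

# Seconds to wait between requests, or None if the site sets no Crawl-delay.
print("crawl delay:", parser.crawl_delay(USER_AGENT))
```

Checking `can_fetch` before every request and sleeping for at least the crawl delay between requests covers the two technical obligations named above; legal requirements still have to be assessed separately.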